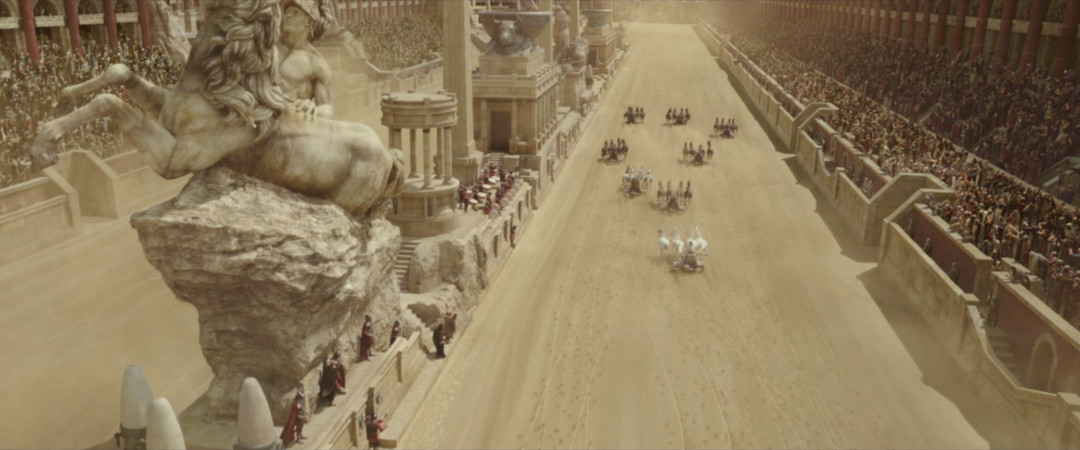

Massive Crowds in Ben Hur

MGM and Paramount's Ben Hur: A Tale of the Christ (2016) is the latest film adaptation of Lew Wallace's historical melodrama (first published in 1880). Jewish nobleman and prince Judah Ben Hur is wrongly accused of treason and condemned to a slave ship by his adopted brother Messala. After years held captive at sea, he finally escapes his shackles and returns to his homeland to seek revenge against his brother.

With the director advocating live action sequences whenever possible, the producers sought a visual effects team capable of integrating CG horses with their real-life counterparts. They awarded the project to Mr. X in Toronto, Canada, who were responsible not only for the live-action integration but also for the biggest effects sequence in the entire film -- the epic life-or-death chariot race between Ben Hur and Messala at Circus Maximus. The CG Circus was created entirely in-house and populated with horses, chariots and riders, spectators, soldiers and drummers. The artists at Mr. X relied exclusively on Massive Software for the crowd simulations in this sequence, creating over 60,000 citizen and soldier agents.

"Some of the shots would include live action elements (e.g., Pilate, a small section of citizens and sections of the circus set), while other shots would be entirely CG," explains Mr. X Senior Crowd TD Simon Milner. "This meant that each citizen would have to seamlessly integrate with the live action extras, as well as stand up to the highest quality on screen in their own right. We have amazingly talented artists in the studio and we needed our crowd to match the quality levels the other departments were delivering in their shots. To meet these quality demands, we needed to create our agents from scratch, to a level of detail I had not seen before on a stadium type agent."

Editor's Note - We are very grateful to Mr. X Senior Crowd TD Simon Milner for providing us with an in-depth look into the use of Massive for this film. His experiences with and opinions on Massive are expressed in the following questions and answers session.

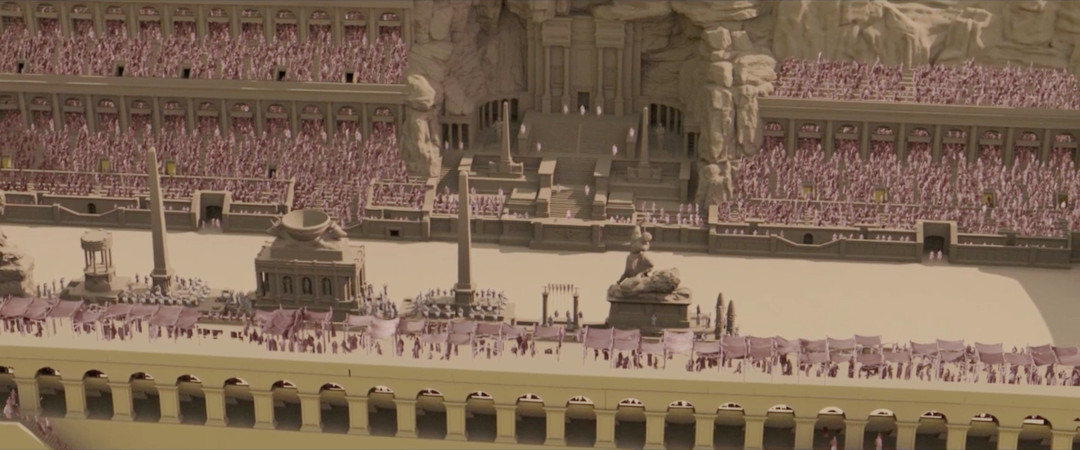

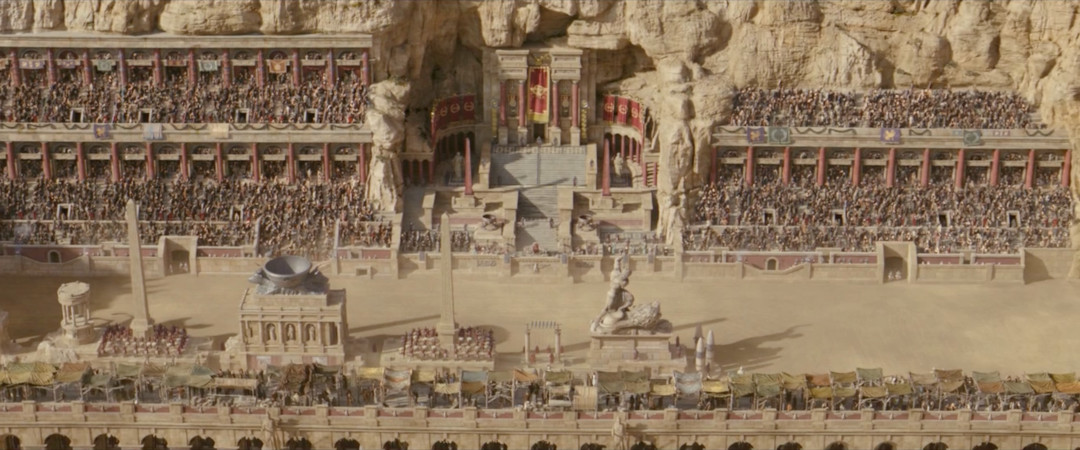

Over 60,000 soldiers and citizens were added to the circus by the crowd artists at Mr. X

What were your challenges for using Massive?

Adapting to frequent shot changes

"We knew this was going to be a big task, and it seemed to get even bigger as we progressed. The edit was frequently changing and this meant we had to be flexible and adapt to shot changes. Placing agents in Massive is a relatively straightforward process, given that there are a number of methods to actually generate the crowd positions, but we ended up making multiple revisions to the layout and placements as the show progressed. The director later required some surrounding cliffs to be cut away with extra seating built into the rocks, then an additional tier of seating was required around the entire circus arena to further enhance the spectacle. So this meant our placements needed to be reworked a few times to meet these changes in terrain geometry. We opted for spline placement of agents due to the irregular spacing and shapes of the different sections."

Achieving high quality looks for the Agents

"Another challenge was the high quality look we wanted to achieve for our crowd. This included both detailed body geometry (including fingers and opening mouths) as well as multiple layers of dynamic cloth for all the clothing variations of robes, scarfs, hoods and cloaks etc. Each agent had between 4 and 8 separate items of cloth which needed simulating, and this was a large hit to our sim times and agent development process. Because of the detail in our agents, we would, in some instances, have about 100 sims running at the same time."

Each agent had between 4 and 8 separate items of cloth with variations of robes, scarfs, hoods, and cloaks, etc.

What were the major benefits from using Massive?

Handling large numbers of actions

"This is the number one reason we use Massive over other crowd systems. The ability to ingest hundreds of mocap clips and process/edit them smoothly and efficiently with brain/motion tree control is a huge benefit to using Massive. I could not imagine trying to work all the variations and performances we use in any other system; this is true for all the shows we use Massive on. We couldn't create the diverse types of agents (humans & creatures) we need in anything else as efficiently and with as much control."

Manipulating Agents through the entire pipeline

"Another benefit for us is Massive's plain text files. We can take our cdl, sim & rib files and generate custom data to pass to Houdini for visualization and rendering. This gives us the ability to cull out and manipulate agents at a much later stage in the pipeline, including giving the lighters control over variations of individual agent looks."

Extensive brain control

"Of course, Massive's extensive brain control helped us a lot in achieving variations in performance and activity. We had very demanding clients (from our immediate internal supervisors all the way up), each of whom wanted the best possible crowd performance in every shot. This meant building agents that avoid repeating actions or 'syncing' cycles with their neighbors, while still behaving naturally."

Creating the chariot sequence

What was required to be delivered?

"For the chariot sequence we delivered over 300 shots. Almost all of these required crowd to be present. The crowd would need to behave naturally and react to both each other and the action unfolding on the race-track (including crashes and overtakes)."

Mr. X was responsible for constructing the complete Circus environment and mountain landscape

How was it achieved?

"The agents were all created from scratch. We started off with two skeletons, for a male and a female, and then set about creating all the geometry variations (including hair and beards). Originally we planned to use interchangeable skinned geometry for wide/distant shots and cloth based clothing for closeup/hero shots. This didn't work out so well with the clothing types we needed, so we reverted to simulating cloth for every agent in every shot.

Next we needed the animations, so we took our list of required actions to Ubisoft's motion capture stage in Toronto and spent a day getting hundreds of variations of sits, stands, claps and cheers. Quite often, when using professional mocap actors, we can look back at the data and immediately spot the actor who performed a series of actions. They are often very recognizable. We didn't want 'stand out' performances, so rather than use professional actors/stunt performers, we decided to use non-professionals to do the acting for the citizens. This gave us an extra level of realism in our crowd behavior; the recorded actions were natural and had a real-world feel to them.

Building the brain was always going to be more challenging than for traditional stadium agents, due to the specific actions and reactions needed. The agents were designated a 'team' to support, via agent variables, which corresponded to one of the chariot riders. Each agent was then designed to look out for their rider and cheer, point, clap etc. whenever the rider was visible or involved in an event (an overtake, a crash etc.). This gave the agents the ability to get excited for an overtake or boo for being overtaken, cheer for an opponent's crash or be shocked if their rider crashed out etc.

Each rider was animated by the animation department and passed as a proxy into Massive. These were then placed in the scene and would match the specific shot. The original idea was to have the entire race mapped out so that we could know, in any given shot, where each chariot would be on the track. This way we could have the crowd automatically look out for their team and react to whatever was happening during the race.

We used sound events either to trigger the actions/reactions (emitting certain frequencies to signal a crash) or just to provide the 'look at' functionality. When a chariot went out of sight, the agent would revert to a default cheering/clapping mode for the other riders, while still reacting to opponent crashes etc. Using sound triggers for the major events ensured that the crowd could turn and look/point/gasp/cheer in reaction to that event."
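The team logic and sound triggers described above can be sketched in a few lines. This is a plain Python illustration, not Massive's brain nodes or API: the frequency bands, the `SoundEvent` fields, the reaction names and the hearing radius are all hypothetical, and the rider names are attached to the event directly for simplicity.

```python
import math
from typing import NamedTuple

# Hypothetical frequency bands (Hz); the interview does not give real values.
EVENT_BANDS = {"overtake": (400.0, 600.0), "crash": (900.0, 1100.0)}


class SoundEvent(NamedTuple):
    position: tuple   # (x, y) of the emitter on the track
    frequency: float  # encodes the kind of event
    rider: str        # rider who overtakes or crashes
    other: str = ""   # rider being overtaken, if any


def decode_event(frequency):
    """Map a heard frequency to an event name, or None if unrecognised."""
    for name, (low, high) in EVENT_BANDS.items():
        if low <= frequency <= high:
            return name
    return None


def react(agent_pos, team, event, hearing_radius):
    """Pick a reaction for an agent supporting the rider named `team`."""
    dx = event.position[0] - agent_pos[0]
    dy = event.position[1] - agent_pos[1]
    if math.hypot(dx, dy) > hearing_radius:
        return "default_cheer"  # event not heard: keep generic activity
    kind = decode_event(event.frequency)
    if kind == "crash":
        # Shock for the agent's own rider, cheering for an opponent's crash.
        return "shocked" if event.rider == team else "cheer"
    if kind == "overtake":
        if event.rider == team:
            return "excited"
        if event.other == team:
            return "boo"
    return "default_cheer"


crash = SoundEvent(position=(3.0, 4.0), frequency=1000.0, rider="Messala")
print(react((0.0, 0.0), "Judah", crash, hearing_radius=10.0))  # cheer
```

Keeping the event type in the sound and the allegiance in an agent variable means one emitted event can produce opposite reactions in neighboring spectators, which is the behavior the answer describes.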

What, if any, sequence/shot specific agent design/modification was done?

"We had quite a few shots where we knew the crowd would be deeply out of focus or were heavily motion blurred as the camera races around. For these shots we simulated 1000 frames of the full circus crowd with generic activity to be used in any shot from any camera angle. This also allowed us to quickly have crowd available to other departments and work out which shots required a customized performance."

The filmed race track was 657 feet long and built at Cinecitta World amusement park, near Rome

"Most of the time the modifications were on the overall activity of the crowd. The agents themselves were pretty sophisticated and pretty autonomous so we were able to focus on refining the excitement and energy levels. One of the first notes to come back for our highly energetic crowd was “it's not a Miley Cyrus concert!” So we went back and worked a lot on getting the energy levels right.

One way to achieve this was to use our own full body motion-capture suit (from Xsens). We could now get a performance note in morning dailies, put the suit on, capture some new actions, process them into Massive and re-sim. Sometimes we were able to get a revised version into dailies that afternoon. This is something we hadn't had the luxury of before; previously it could take weeks to schedule another capture session at a stage.

One specific shot where this really came into its own was when a horse jumps the wall and into the crowd. We were able to do many takes of reacting to the horse bolting up the steps until we got it right in the shot."

What did using Massive bring to this shot?

"One difference between the optical mocap data we'd get from Ubisoft, and what we were able to capture ourselves, was that Ubisoft would provide us with finger animation. The Xsens suit doesn't offer finger tracking so all our internal captures had default splayed fingers with no animation. To address this, some brain logic was built in Massive to procedurally animate the fingers into various poses to match the animation currently playing, i.e. pointing, a fist for cheering or coughing, cupping the mouth for shouting etc. Massive would work out what animation was playing and blend the fingers into the right pose at the right time, for each hand, reverting to a natural pose at other times.

This worked so well that in the end we stripped out the finger animation curves from all the action clips and relied solely on Massive managing the finger poses. We used the same system to open & close the mouth for cheers, shouts, talking etc."
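The finger-pose idea can be illustrated with a small sketch: look up a target hand pose from the name of the action currently playing, then ease the fingers toward it each frame. This is not the studio's brain setup; the pose values, action names, per-finger curl representation and `blend_fingers` function are assumptions made for the example.

```python
# Curl per finger (thumb, index, middle, ring, pinky): 0.0 = straight, 1.0 = fully curled.
# All pose values and action names below are hypothetical.
FINGER_POSES = {
    "natural": (0.2, 0.2, 0.2, 0.2, 0.2),
    "point": (0.3, 0.0, 1.0, 1.0, 1.0),
    "fist": (1.0, 1.0, 1.0, 1.0, 1.0),
}

ACTION_TO_POSE = {
    "point_at_rider": "point",
    "cheer": "fist",
}


def target_pose(action):
    """Return the finger pose for an action, falling back to the natural pose."""
    return FINGER_POSES[ACTION_TO_POSE.get(action, "natural")]


def blend_fingers(current, action, rate):
    """Move each finger a fraction `rate` (0..1) of the way toward the target pose."""
    target = target_pose(action)
    return tuple(c + (t - c) * rate for c, t in zip(current, target))


# One hand, starting fully open, easing toward a cheering fist over two frames.
hand = (0.0, 0.0, 0.0, 0.0, 0.0)
hand = blend_fingers(hand, "cheer", 0.5)
hand = blend_fingers(hand, "cheer", 0.5)
```

Calling the blend once per hand per frame gives each hand its own pose, and any action without an entry in the table drifts back to the natural pose. A mouth open/close value could, in principle, be driven through the same kind of lookup.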

What feedback from supervisors did you get?

"Most of the feedback we would get was based on mood and energy levels. There were many notes asking to 'increase the energy' or 'calm them down' or 'lose the Miley Cyrus fans'.

Some shots required a lot of work to match to a different reference shot. Even though it was the same agent with the same actions, from different camera angles it just looked wrong. So we would need to make the agent match the reference 'feel' rather than re-use the same agent settings (or same sim). What seemed to be identical performances on screen were actually entirely different.

Other feedback was related to particular captures which were jarring or very noticeable, such as calling out repeating actions or 'no double hand cheers'. These were notes which lessened with each shot revision as we were constantly adding to the library of available actions with our mocap suit, thus reducing the possibility of duplicate actions appearing."

Did anything interesting or funny happen while working on this sequence?

"Once we thought we had wrapped on the feature, we were given the opportunity to create a VR experience for the promotion of the movie (Ben-Hur | 360 Video | Paramount Pictures International). This would be a 360 degree camera running around 2 mins of the chariot race, looking from Judah's position. We had to simulate almost 3000 frames of crowd. We used the exact same crowd agent as in the feature (including cloth) at the same level of detail. But unlike the feature, we had to sim & render the entire crowd for every frame. Using one of our power workstations, the VR sequence took 8 days to simulate. After a week we were all getting really nervous about power outages or system errors leaving us with little time to re-do the sim if something went wrong. Thankfully Massive didn't miss a beat and we were able to deliver a breathtaking POV look at the chariot race, and a fully functioning crowd from any angle the viewer decides to look."