The Mill goes with Massive for Sky Sports

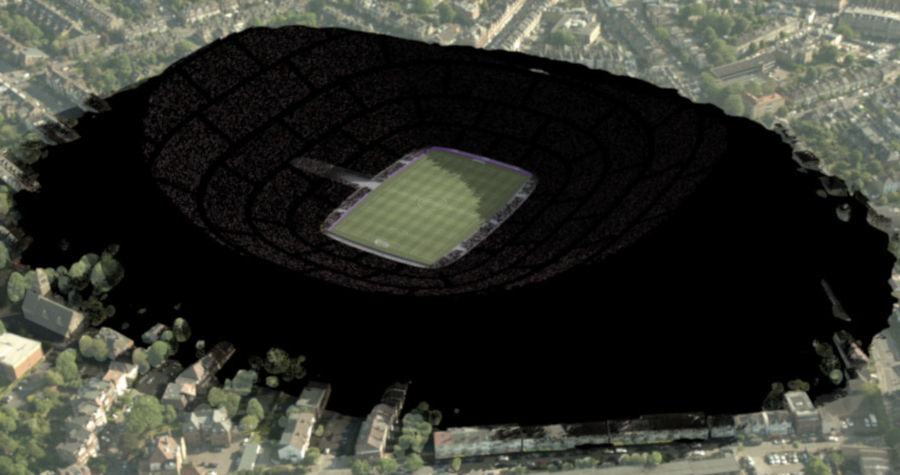

Filling stadiums with computer-generated crowds has become something of a mainstay of football commercials in the past few years. But in a recent Sky Sports spot called 'Take Your Seat', visual effects studio The Mill in London gave the idea a new twist.

The commercial features thousands of viewers grabbing any kind of seat they can find, from lounge chairs to mattresses and barber stools, and running to a stadium to catch a football game. Watch The Mill's VFX breakdown and the final commercial.

Agency adam&eveDDB and director Sam Brown (via Rogue Films) called on The Mill to generate several CG crowd scenes in Massive for the spot, culminating in a mountain of audience members perched on this random assortment of seats. It was a task that took full advantage of the different behaviors possible in the software to show the stadium filling to the brim.

© 2018 The Mill. All Rights Reserved

Choosing Massive for all the types of crowd behavior

"There were quite a few different crowd situations to cover in the commercial," says The Mill's Massive supervisor Edward Hicks. "The locomotive behavior included running through streets, carrying furniture solo, carrying furniture in pairs, dense crowd avoidance, obstacle avoidance, uneven terrain, carrying chairs along stairways and aisles, and, finally, climbing up a procedurally generated mountain of sofas."

Hicks says Massive was chosen for the commercial because of its quality of animation and behavioral control. "By starting with a base layer of actions connected logically in a motion tree, and with a robust limb IK planting system, we could have the same characters realistically navigating all kinds of terrain with minimal per-shot setup," notes Hicks. "Added on top of this system was a means of controlling the agent procedurally using channel offsets, and by making use of various agent-centric sensory channels, we could handle believable motion in complex situations. This is something I have yet to see done well in other crowd packages. Getting agents to convincingly climb over procedurally generated terrain is not a straightforward task."

Approaching the motion

The spot required a number of different types of crowd animation. Sometimes, a VFX studio might be able to reach into their existing animation libraries to feed into Massive agents, but here The Mill needed a few extra bespoke locomotions. That necessitated a motion capture shoot, which was conducted at Centroid in Pinewood Studios. Here, stunt performers were captured going through the actions of running across parks, climbing stadium areas and carrying seats.

The next step was taking in the motion capture data and, as Hicks describes, 'slicing and tagging it'. "Takes such as RUN_COUCH might be broken up into the following actions: standCouch (loop), standCouch_to_runCouch (transition), runCouch (loop) and runCouch_to_standCouch (transition)."

"These actions formed the building blocks of a motion tree in Massive," adds Hicks, "and could be linked together to provide the brain with a path through available animations with which to navigate the world they found themselves in."
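As a rough illustration of the idea, the sliced actions can be treated as nodes in a directed graph, with a search finding a chain of actions from the current one to a desired one. This is a minimal sketch, not Massive's own mechanism: the action names come from the RUN_COUCH example above, while the graph layout, the `MOTION_TREE` dictionary and the `find_action_path` helper are assumptions made for illustration.

```python
from collections import deque

# Hypothetical motion tree: each action maps to the actions allowed to follow it.
# Action names are from the RUN_COUCH example; the connections are assumed.
MOTION_TREE = {
    "standCouch": ["standCouch", "standCouch_to_runCouch"],
    "standCouch_to_runCouch": ["runCouch"],
    "runCouch": ["runCouch", "runCouch_to_standCouch"],
    "runCouch_to_standCouch": ["standCouch"],
}


def find_action_path(tree, start, goal):
    """Breadth-first search for the shortest chain of actions from start to goal.

    Returns a list of action names, or None if the goal cannot be reached.
    """
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for next_action in tree.get(path[-1], []):
            if next_action not in visited:
                visited.add(next_action)
                queue.append(path + [next_action])
    return None


if __name__ == "__main__":
    # A standing agent that wants to run must pass through the transition clip.
    print(find_action_path(MOTION_TREE, "standCouch", "runCouch"))
```

In this toy graph, loops point back to themselves and transitions connect one loop to another, which is why a standing agent cannot jump straight into the run cycle.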

Behavior controls were then built in Massive to tell the agents where to go and what to do. "This generally took the form of 'try to get over here', 'try to avoid obstacles', and 'try to avoid people,'" says Hicks. "It’s here where you add controls to deal with your specific requirements. An important consideration in behavior design is how the demands of the A.I. overlap, and how you prioritize and resolve conflicts."
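One common way to resolve overlapping demands like these is to give each one a weight and blend them into a single heading. The sketch below shows that generic technique only; the weights, the 2D vectors and the `blend_desires` function are hypothetical and do not describe how the behavior was actually built in Massive.

```python
import math


def blend_desires(desires):
    """Blend weighted 2D steering desires into one unit-length heading.

    desires is a list of (weight, (x, y)) pairs. A higher weight means a
    higher priority. Returns (0.0, 0.0) if the desires cancel out exactly.
    """
    x = sum(weight * vx for weight, (vx, vy) in desires)
    y = sum(weight * vy for weight, (vx, vy) in desires)
    length = math.hypot(x, y)
    if length == 0.0:
        return (0.0, 0.0)
    return (x / length, y / length)


if __name__ == "__main__":
    heading = blend_desires([
        (1.0, (1.0, 0.0)),  # 'try to get over here': goal lies along +x
        (2.0, (0.0, 1.0)),  # 'try to avoid obstacles': push along +y
        (3.0, (0.0, 0.0)),  # 'try to avoid people': nobody nearby, no push
    ])
    print(heading)
```

Raising the weight on one desire lets it dominate when two of them disagree, which is one simple way to express a priority order.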

Hicks also notes that the look could be developed in parallel to the behavior, and merged at any time. Indeed, the spot called for all kinds of football team kits to be represented, which lookdev artists generated while Hicks himself focused on the behavior and motion.

When the characters were ready, they were then loaded into shots. Says Hicks: "I tend to do this from a centralized location rather than fully duplicating the asset into shots. This keeps things easy to maintain. You can control the agents with scene variables that allow the same version of the asset to behave differently."

A breakdown of behaviors

To achieve the desired behaviors of the seat-stealing crowd members, Hicks' approach was to start with a base of motion capture and then add procedural control on top. "For example," he says, "if a scene called for someone to walk along holding a chair, it was better and easier to simply capture mocap of someone carrying a chair, but you can start with a simple walk in a pinch. However, it's possible to create a lot of variation for free with just one take by adding animation on top of the arms, spine and head."

The climbing behavior seen in the spot was approached in this way. The starting point was a generic climbing animation that resembled a crawl over inclined terrain. Once that was established, the agent would then need to find places to grab hold with hands and feet.

But, admits Hicks, "this was harder than it sounds! The targets needed to be identifiable, near enough to reach without fully extending the limb, and have no obstacles between the limb and the goal. As the terrain was procedurally generated, the terrain markup needed to be procedural too. Using the same technology that generated the stadium seating data, I generated the furniture as agents that could more directly communicate with my climbers about where to go."

"Once that was settled," continues Hicks, "the limbs needed to know when to grab hold and when to - smoothly - let go. There's so much that can go wrong, and when you add avoidance and prop carrying on top of that, it can be a real headache! The climbing solution was by far the most difficult behavior to get working."
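The three conditions Hicks lists for a usable grab target (identifiable, within comfortable reach, unobstructed) can be sketched as a simple filter. This is an illustrative sketch under stated assumptions: the `pick_grab_target` function, the `reach_fraction` margin and the `blocked` callback are all hypothetical, and in the production setup this information came from furniture agents rather than a plain list of points.

```python
import math


def pick_grab_target(limb_root, max_reach, targets, blocked, reach_fraction=0.9):
    """Return the nearest usable grab target, or None if there is none.

    limb_root      -- (x, y, z) of the shoulder or hip the limb hangs from
    max_reach      -- fully extended limb length
    targets        -- candidate (x, y, z) hand or foot holds
    blocked        -- callable(start, end) -> True if something is in the way
    reach_fraction -- assumed margin so the limb never fully extends
    """
    usable = []
    for target in targets:
        distance = math.dist(limb_root, target)
        if distance > max_reach * reach_fraction:
            continue  # too far: would hyperextend the limb
        if blocked(limb_root, target):
            continue  # something sits between the limb and the hold
        usable.append((distance, target))
    if not usable:
        return None
    return min(usable, key=lambda pair: pair[0])[1]


if __name__ == "__main__":
    holds = [(0.5, 0.0, 0.0), (0.95, 0.0, 0.0), (0.2, 0.0, 0.0)]
    # Pretend the closest hold is hidden behind another piece of furniture.
    is_blocked = lambda start, end: end == (0.2, 0.0, 0.0)
    print(pick_grab_target((0.0, 0.0, 0.0), 1.0, holds, is_blocked))
```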

Another complex behavioral task was getting pairs of agents to carry a sofa between them. The solution was to spawn two agents on the same spot, who would find each other by proximity, and then be set to agree amongst themselves via their ID which was the leader and which was the follower.

"They would then separate, face one another, and the leader would spawn a sofa between them," describes Hicks. "They would also each spawn an invisible 'handle' utility agent, which would tell the sofa agent where the leader and follower wanted to hold their end of the sofa. Generally this was a little forward of their hips, but it could be moved independently. The sofa agent would align between these handles, and then the human agents would be free to reach out with their arms and grab the sides of the sofa."
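The pairing logic can be illustrated with two small functions: one that settles leader and follower from the agents' IDs, and one that places the sofa between the two handles. This is a minimal sketch, not the production setup. The rule that the lower ID leads, the 2D ground-plane coordinates, and the names `assign_roles` and `place_sofa` are all assumptions; the source says only that the agents agreed on roles via their ID.

```python
import math


def assign_roles(id_a, id_b):
    """Return (leader_id, follower_id). Assumed rule: the lower ID leads."""
    return (id_a, id_b) if id_a < id_b else (id_b, id_a)


def place_sofa(leader_handle, follower_handle):
    """Align a sofa between two handle positions on the ground plane.

    Each handle is an (x, z) pair. Returns the sofa midpoint and its yaw in
    radians, measured from the +x axis and pointing from follower to leader.
    """
    lx, lz = leader_handle
    fx, fz = follower_handle
    midpoint = ((lx + fx) / 2.0, (lz + fz) / 2.0)
    yaw = math.atan2(lz - fz, lx - fx)
    return midpoint, yaw


if __name__ == "__main__":
    leader, follower = assign_roles(7, 3)
    print(leader, follower)
    print(place_sofa((2.0, 0.0), (0.0, 0.0)))
```

Because both agents apply the same deterministic rule to the same pair of IDs, they reach the same answer without any further negotiation.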

Hicks says that so long as the agents stayed within reaching range, the action looked suitably natural, especially when paired with sofa-carrying motion capture data that had been acquired earlier. The final piece of the puzzle involved adding behavior to the follower to try to remain in front of the leader. "This meant the leader could execute turns and walk over inclined terrain without requiring more motion data," says Hicks. "Most other potential solutions to this task would have to compromise at this point, but it's possible with Massive."

Where Massive fits in The Mill pipeline

The Mill typically renders Massive crowds with Arnold via Maya. The workflow on the 'Take Your Seat' spot involved generating a sim of .apf files and .ass Arnold archives, which were loaded into Maya as a procedural. At render time, these procedurals were expanded out to sit in the lighting scene, a process designed to keep the scene and simulation sizes manageable.

Meanwhile, the stadium itself was procedurally built in Houdini. "The advantage of this," states Hicks, "was that the client could at any time drastically change the dimensions to fit their needs."

"Still," warns Hicks, "rapid drastic changes can be a double-edged sword. We built a placement exporter to smooth this process a bit, adding an output in the Houdini stadium generator scene that would create .mas files with the placement perfectly matching whatever new geometry we had that day. Thanks to a very human-parsable file format, it didn't take long to write tools that can powerfully manipulate Massive scenes in code."
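To give a sense of what regenerating placement from stadium dimensions might involve, the sketch below computes one position and heading per seat on a curved stand. It is a hypothetical stand-in only: it does not use Houdini, it does not write the .mas format (whose syntax is not reproduced here), and the `seat_placements` function, its parameters and the heading convention are all assumptions.

```python
import math


def seat_placements(rows, seats_per_row, inner_radius, row_depth, row_rise, arc_degrees):
    """Generate (x, y, z, heading_degrees) for each seat on a curved stand.

    The stand is centred on the +z axis and curves around the origin.
    Each row sits row_depth further out and row_rise higher than the last.
    Assumed convention: heading is the seat's angle around the origin plus
    180 degrees, so every seat faces back towards the origin.
    """
    placements = []
    for row in range(rows):
        radius = inner_radius + row * row_depth
        height = row * row_rise
        for seat in range(seats_per_row):
            # Spread seats evenly across the arc, centred within each slot.
            t = (seat + 0.5) / seats_per_row
            angle_degrees = -arc_degrees / 2.0 + t * arc_degrees
            angle = math.radians(angle_degrees)
            x = radius * math.sin(angle)
            z = radius * math.cos(angle)
            heading = (angle_degrees + 180.0) % 360.0
            placements.append((x, height, z, heading))
    return placements


if __name__ == "__main__":
    # Changing any dimension here regenerates every seat position to match.
    for placement in seat_placements(2, 3, 50.0, 1.0, 0.5, 90.0):
        print(placement)
```

The appeal of this kind of generator is that a change to the stadium dimensions only means rerunning it, rather than repositioning agents by hand.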

Sometimes Massive also came in handy in unexpected ways. As noted, agents were generated for each piece of furniture in the hill climbing shots. "They provided collision geometry," explains Hicks, "as well as useful information on sound channels and agent fields, which aided the climbing agents in traversing the geometry without sticky-looking or hyperextended limbs and without obvious geometry intersections. Other than that, all the CG furniture items in the running shots prior to the stadium walls were Massive agents, or were Houdini elements driven by agent sim data exported from Massive."

Big scenes on a commercial turnaround time

Reflecting on the number of hours put into the crowds for the spot, Hicks notes that "Massive is heavy on setup, but light when it comes to shots. If the agent's behavior was ready, shots were usually pretty quick to run through. Each shot took a couple of days to get into a good place once the behavior was built."

The overhead shots in the park required some tweaking in terms of placement, density and speed, but the most variable and iterated shots involved the stadium. "The furniture per shot numbered in the multi-millions, and there were about 100,000 stadium agents, so it did tax the renderfarm at times," says Hicks.

Up front, the motion capture process lasted a couple of weeks from planning to integration. The basic agent set-up and look took about a week to do, while the sofa carrying development took a further week. Climbing development lasted a couple of weeks.

"As I was the only crowd operator on the job," says Hicks, "it was important to prioritize things that the client could see right away, giving me time to fine-tune the more complex behavior for later delivery. With a sensible schedule, good communication, a solid team, and with the trust of clients and CG leads, it was possible to create these ambitious crowd solutions with just one crowd operator and without compromising on quality or client satisfaction."